Human, Bot or Cyborg?

Three clues that can tell you if a Twitter user is fake.

Banner image: screen grab of @BlackManTrump from December 19, 2016, showing the date the account was created and the number of posts (Source: @BlackManTrump / Twitter).

Fake news is one of the biggest challenges facing the modern internet, and it is underpinned by the fake social media accounts that spread it. In this article, the DFRLab looks at the clues that can be used to identify the more obvious fake accounts.

One of the key challenges facing bona fide social media users is how to tell who the other bona fide users are. Is this unknown account a human? A fake, automated “bot” account pretending to be human? Or a cyborg: a human who uses bot technology to post faster, longer and more frequently? The challenge applies across all sorts of platforms, including dating apps and online therapy services as well as Twitter and Facebook.

Many of these bots are purely commercial, designed to sell products or attract clicks. Some, however, are political, amplifying false or biased stories in order to influence public opinion. This is one of the purposes of the Russian “troll factory”, with branches in St Petersburg and elsewhere; it was the purpose of the pro-Trump and pro-Clinton bots during the U.S. presidential campaign. Political bots are a key driver in the spread of fake news, amplifying and advertising hoaxes online.

The problem is so pressing that German Chancellor Angela Merkel has called for a debate on how to deal with it, including by regulation, if necessary.

The challenge is huge. According to Emilio Ferrara, research assistant professor of computer science at the University of Southern California, more than 400,000 social bots posted more than 4 million tweets about the U.S. election between 16 September and 21 October. A separate report by researcher Sam Woolley of Oxford University found that “around 19 million bot accounts tweeted in support of either Trump or Clinton in the week leading up to Election day.” Disturbingly, genuine users appeared to find it difficult to distinguish between these fake accounts and humans.

There are various techniques for identifying bots. Users of dating apps can test suspects with awkward questions and sarcasm; users of Facebook and Twitter can analyze the questionable account’s network of followers and relationships. Deeper analysis examines an account’s behavior over time, its linguistic ability and its connections with other accounts. Tools such as “Bot or Not” automate such analyses.

The purpose of this article is to suggest a rapid and operational way of identifying suspected political bots on Twitter, without engaging with them, and without technical expertise. It is aimed at the general internet user. The goal is to indicate whether an account is likely to be a political bot. The approach described below is therefore indicative; it should not be taken as absolute proof.

Activity, amplification, anonymity

The simpler political bots share three core characteristics: activity, amplification and anonymity.

The lead characteristic is activity. Political bots exist to promote messages: the higher the volume of tweets they publish, the more messages they can promote.

The first step towards identifying a political bot is therefore to check its level of activity. A visit to any Twitter account’s profile will show when it was created and how many times it has posted. Dividing the number of posts by the number of days since the account was created gives a rough average of how many tweets or likes it produces each day.

It is important to maintain perspective: some genuine people and accounts are highly active for entirely legitimate reasons. For example, as of December 9, 2016, U.S. president-elect Donald Trump (@realDonaldTrump) had tweeted 34,100 times since March 2009, or roughly 12 times a day. UK Twitter personality Katie Hopkins (@KTHopkins) had posted some 49,200 tweets since February 2009, or roughly 17 tweets a day. UK TV personality Scarlett Moffatt (@ScarlettMoffatt) had posted 72,000 tweets and likes since July 2013, at an average of 56 a day. As of December 21, the Reuters news wire (@ReutersWorld) had published 118,000 tweets since July 2011, at a rate of approximately 59 per day.
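The daily averages above are simply the post count divided by the number of days since the account was created. As a minimal sketch, that calculation can be written in a few lines of Python; the function name `average_daily_activity` is hypothetical. Because a profile shows only the month of creation, the example assumes the first day of that month, which lengthens the period and so gives a slightly conservative (lower) rate.

```python
from datetime import date

def average_daily_activity(posts: int, created: date, observed: date) -> float:
    """Average number of posts (tweets and/or likes) per day since creation."""
    days = (observed - created).days
    if days <= 0:
        raise ValueError("observation date must be later than creation date")
    return posts / days

# Assumption: creation on the 1st of the month shown on the profile.
print(round(average_daily_activity(34_100, date(2009, 3, 1), date(2016, 12, 9))))    # 12, @realDonaldTrump
print(round(average_daily_activity(118_000, date(2011, 7, 1), date(2016, 12, 21))))  # 59, @ReutersWorld
```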

For the purposes of this analysis, a level of activity on the order of 72 engagements per day over an extended period of months — in human terms, one tweet or like every 10 minutes from 7am to 7pm, every day of the week — will be considered suspicious. Activity on the order of 144 or more engagements per day, even over a shorter period, will be considered highly suspicious. Rates of over 240 tweets a day over an extended period of months will be considered as “hypertweeting” — the equivalent of one post every three minutes for 12 hours at a stretch.
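These thresholds follow from simple arithmetic on a 12-hour day: one post every 10 minutes is 72 a day, and one every three minutes is 240. The Python sketch below encodes that rule of thumb; the function name is hypothetical, and because the article does not define “an extended period of months”, the code assumes it means three months or more.

```python
# Thresholds derived from a 12-hour waking day (7am to 7pm)
WAKING_MINUTES = 12 * 60
SUSPICIOUS = WAKING_MINUTES // 10      # one post every 10 minutes -> 72 a day
HIGHLY_SUSPICIOUS = 2 * SUSPICIOUS     # 144 a day
HYPERTWEETING = WAKING_MINUTES // 3    # one post every 3 minutes -> 240 a day

# Assumption: "an extended period of months" is read as 3 months or more.
MIN_SUSTAINED_MONTHS = 3

def classify_activity(per_day: float, months_active: float) -> str:
    """Label an average daily post rate using the rule of thumb above."""
    sustained = months_active >= MIN_SUSTAINED_MONTHS
    if per_day > HYPERTWEETING and sustained:
        return "hypertweeting"
    if per_day >= HIGHLY_SUSPICIOUS:
        return "highly suspicious"  # applies even over a shorter period
    if per_day >= SUSPICIOUS and sustained:
        return "suspicious"
    return "no activity flag"

print(classify_activity(59, 65))    # @ReutersWorld: no activity flag
print(classify_activity(250, 29))   # @StormBringer15: hypertweeting
print(classify_activity(850, 3.5))  # @BlackManTrump: hypertweeting
```

The rates and durations in the usage lines are the approximate figures given in this article.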

An example is the account @StormBringer15, a pro-Kremlin and pro-Ukrainian separatist account created in July 2014. As of December 19, 2016, this account had posted 225,000 tweets, or an average of roughly 250 per day — more than four times as many as Reuters.

Another is the account @BlackManTrump, a regular amplifier of alt-right messaging in the United States. According to its profile, the account was created in August 2016; by November 14, 2016, when it ceased activity, it had posted 89,900 tweets. Assuming that the account was created on 1 August, this translates to almost 850 posts per day, or more than one every two minutes, 24 hours a day. (On 13 December 2016, the account resumed sporadic retweeting.)

While it is theoretically possible for a human user to hypertweet like this over a short period of time, it is stretching credibility to suggest they could keep it up for months on end. As such, this level of activity suggests very strongly that the accounts in question are automated bots.

Amplification

Hypertweeting is usually a good indicator that an account is a bot; however, it need not indicate that it is a political bot set up to spread a specific message. Many organizations use bots to automate their posts; online tools such as TweetDeck and HootSuite allow individual users to do the same.

A good example of this is @yuuji_K1. This account is hyperactive, posting over 1 million tweets since March 2014, at a rate of almost 1,000 a day.

But its posts consist of retweets from news organizations, apparently chosen without reference to any particular editorial slant. Some are overtly biased, such as Kremlin outlets RT and Sputnik, but many others are not — for example Reuters and the Associated Press. The emphasis of the output appears to be news, rather than the advocacy of one particular view. As such, while this account is clearly a bot, it does not appear to be a political one, bent on amplifying one point of view.

By contrast, @BlackManTrump is one-sided. Tweets it posted on 14 November included the claim that the “FBI exposes Clinton pedophile Satanic network”, the comment that “Hillary is the Swamp”, and a meme mocking Clinton supporters. Accounts it cited included outspokenly pro-Trump commentators such as Alex Jones and Jared Wyand, and overtly pro-Trump accounts such as @YoungDems4Trump and @TallahForTrump. All the tweets appeared to be retweets of other accounts, with the telltale “RT @” prefix removed.

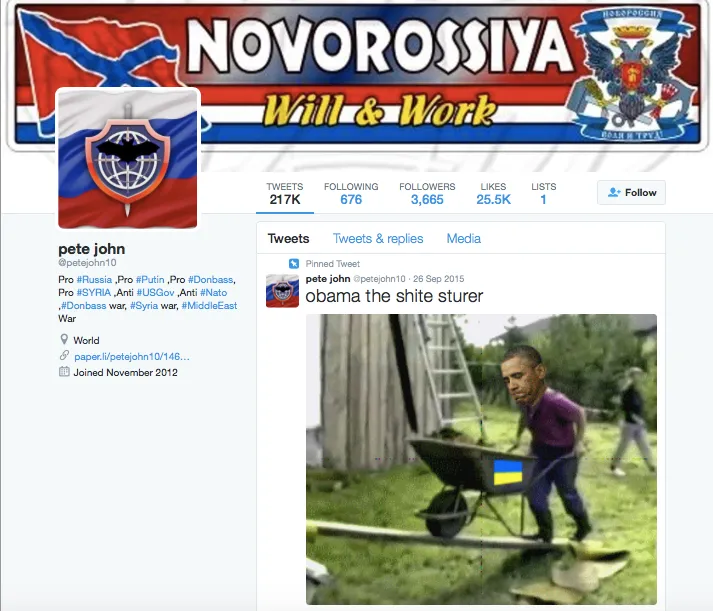

@StormBringer15 also amplifies partisan messages. Unlike @BlackManTrump, it intersperses its retweets with apparently authored comments, but between November 29 and December 19, over 90 percent of the 5,000 tweets it posted were retweets. The great majority were from overtly pro-Russian or anti-Western sites, including the notorious South Front disinformation account, self-proclaimed “pro-Russia media sniper” Marcel Sardo, and the account PeteJohn10, which describes itself as “Pro #Russia ,Pro #Putin ,Pro #Donbass, Pro #SYRIA ,Anti #USGov ,Anti #Nato ,#Donbass war, #Syria war, #MiddleEast War.”
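Measuring amplification means counting how much of an account’s output is retweets, and whose. As an illustration only, the Python sketch below assumes a sample of tweets has already been copied out as plain text in the classic “RT @handle:” form; the function `amplification_profile` and the sample tweets are hypothetical. It returns the share of retweets and the most-amplified accounts.

```python
import re
from collections import Counter

# Classic retweet text: "RT @handle: original text"
RT_PREFIX = re.compile(r"^RT @(\w+):")

def amplification_profile(tweets: list[str], top_n: int = 3) -> tuple[float, list[tuple[str, int]]]:
    """Return the share of retweets and the top_n most-retweeted handles."""
    sources = [m.group(1) for t in tweets if (m := RT_PREFIX.match(t))]
    share = len(sources) / len(tweets) if tweets else 0.0
    return share, Counter(sources).most_common(top_n)

# Invented sample for illustration
sample = [
    "RT @outlet_a: first story",
    "RT @outlet_a: second story",
    "RT @outlet_b: third story",
    "An apparently authored comment",
]
share, top = amplification_profile(sample, top_n=1)
print(share, top)
```

As the @BlackManTrump case shows, retweets with the “RT @” prefix stripped will not be counted this way, so a low share does not by itself rule out amplification; the one-sidedness of the sources still has to be judged by reading them.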

Thus @BlackManTrump appears to be purely automated; @StormBringer15 appears to be heavily automated with a degree of human involvement, a cyborg. Unlike @yuuji_K1, both accounts amplify particular views, rather than broad-based news. Taken together with their inhuman activity levels, this reinforces the impression that they are largely automated political accounts.

Anonymity

A supporting clue to identifying the simpler political bots can be found in their identities, or lack thereof. As a rule of thumb, the more impersonal an account’s handle, screen name, bio and avatar, the more likely it is to be a fake. By contrast, if an account can be definitively linked to a human being, even if it is highly active, it is not a political bot in the sense of this article.

Both @StormBringer15 and @BlackManTrump are anonymous. In each case, the handle, screen name, bio and avatar give no indication of the person behind the account. If social media are a virtual meeting place, these users are wearing virtual masks. Who is the user behind the mask? There is no way of knowing.

Anonymity is a sliding scale. Some likely bots identified by researcher Lawrence Alexander, of investigative journalism group Bellingcat, do not even have coherent handles, but only alphanumeric scrambles: @2KaZTUKLjqWQB7g, @FSoDjw3dx7TBqyx and @xuYvcSWDaal81zy. All were set up in late 2016, tweeted intensively for a few days on the situation in Yemen, amplified the same hashtags repeatedly, and then ceased activity.

In other cases, researchers have identified likely bots because they had a masculine handle but a feminine screen name, or an Arabic screen name but tweeted in Spanish. Immediately after the murder of Russian politician Boris Nemtsov, Alexander and other researchers noticed that a huge number of accounts which had previously tweeted on a variety of subjects all began tweeting about Nemtsov, indicating a network of thousands of bots suddenly turned to a single purpose.

The very simplest bot accounts are sometimes referred to as “eggs”, because they do not have an avatar picture at all, only the egg icon Twitter supplies to new users. An example is French-language account @maralpoutine, which was created in November 2015 and had, by 19 December 2016, posted over 83,000 tweets and likes, or over 200 a day. Despite its age and activity, it still has only an egg for its avatar. The great majority of its posts are retweets, many of them of far-right and pro-Kremlin accounts. It is likely to be a political bot.

At the same time, anonymity alone does not prove the presence of a bot. For example, many parody accounts are technically anonymous, in that they conceal the account holder, but they cannot be viewed as bots. This is true of accounts such as @SovietSergey, which parodies the Russian foreign ministry, @RealDonalDrumpf, which parodies Trump, and @HillaryClinton_, which parodies Hillary Clinton.

Similarly, accounts from conflict zones or countries ruled by repressive regimes, or which report on sensitive information, are often anonymized for reasons of safety, such as @Askai707, who documents the conflict in Ukraine, or @EdwardeDark, who posts from Syria.

However, none of these accounts posts on the Reuters scale of activity. @SovietSergey is the busiest, with an average of 30 posts a day since May 2016. The others are much less active, in the range of three to five tweets a day on average.

Anonymity, therefore, is not a conclusive factor in identifying a bot. However, it is a valuable supporting test to perform. The higher the degree of anonymity, the higher the likelihood of a bot.

Conclusion

There are many forms of political bots, and they continue to grow in sophistication. Some bots are crafted to mimic human behavior (such as @arguetron, which debated for hours with alt-right users in the United States); others are managed by human users who inject personal views and interactions into the pattern of activity, masking the degree of automation.

In some accounts, the user is a human who uses automation to amplify their messaging; these should be considered as cyborgs, automated humans, rather than bots. A subset of the cyborgs is the “revenants”, which are repeatedly suspended for breaching Twitter’s rules of behavior, but rapidly respawn and continue their activity.

Identifying such accounts requires more technical capability, and more time.

Nonetheless, many of these bot and cyborg accounts do conform to a recognizable pattern: activity, amplification, anonymity. An anonymous account which is inhumanly active and which obsessively amplifies one point of view is likely to be a political bot, rather than a human. Identifying such bots is the first step towards defeating them.
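The three tests can be combined into a simple checklist. The Python sketch below is one hypothetical way to encode the order of reasoning in this article: activity first, amplification of one view second, anonymity as a supporting clue. The 72-a-day cut-off comes from the article; the 50 percent retweet share is an assumption, `one_sided` and `anonymous` are judgements the reader makes by inspecting the account, and for simplicity the code ignores how long the activity has been sustained. Like the approach itself, its output is indicative, not proof.

```python
from dataclasses import dataclass

SUSPICIOUS_PER_DAY = 72   # the article's threshold for suspicious activity
MIN_RETWEET_SHARE = 0.5   # assumption: most output is retweets

@dataclass
class AccountObservation:
    posts_per_day: float  # post count divided by days since creation
    retweet_share: float  # fraction of sampled posts that are retweets (0 to 1)
    one_sided: bool       # reader's judgement: does it amplify a single point of view?
    anonymous: bool       # do handle, screen name, bio and avatar hide the person?

def three_a_check(obs: AccountObservation) -> str:
    """Indicative only: combines activity, amplification and anonymity."""
    if obs.posts_per_day < SUSPICIOUS_PER_DAY:
        return "no activity flag"
    if not (obs.one_sided and obs.retweet_share >= MIN_RETWEET_SHARE):
        return "possible bot, but not evidently political"
    if obs.anonymous:
        return "likely political bot"
    return "political amplifier; check whether a real person is behind it"

# @StormBringer15 as described above: about 250 posts a day,
# over 90 percent retweets, one-sided and anonymous
print(three_a_check(AccountObservation(250, 0.9, True, True)))  # likely political bot
```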