Facebook pages used bait-and-switch to exploit sympathies for Ukraine war

DFRLab found emotionally charged Facebook posts about the Ukraine war were later edited to promote clickbait articles.


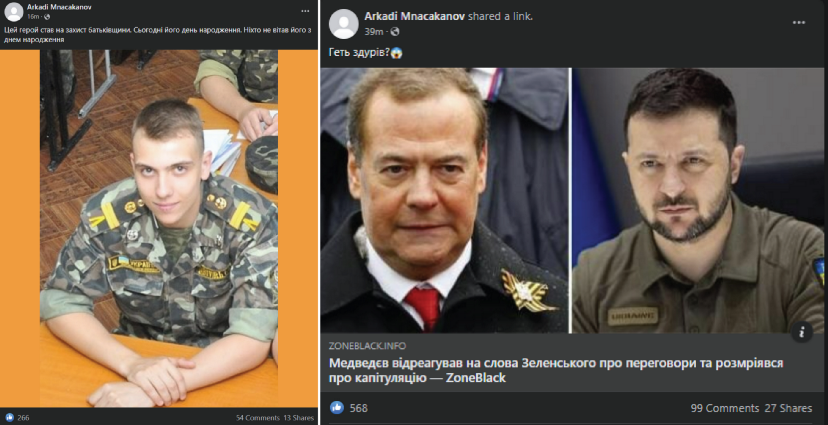

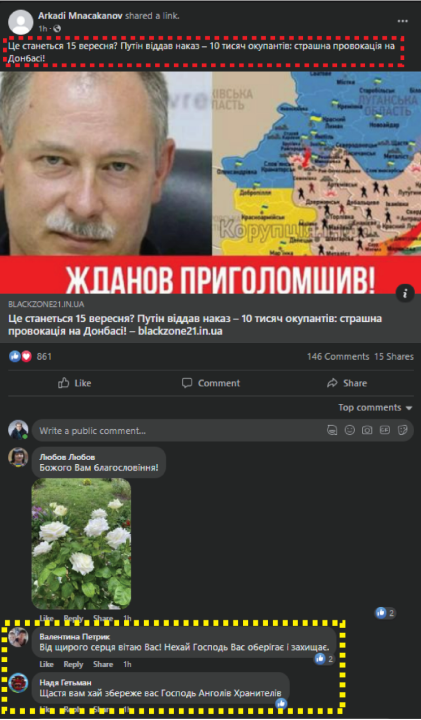

BANNER: Facebook pages and groups involved in the scheme would publish inspiring stories, like the celebration of a Ukrainian soldier’s birthday (left), and replace them with clickbait news coverage (right) once the original post had garnered a critical mass of engagement. (Source: Facebook)

Facebook pages with links to Armenia are engaging in a bait-and-switch tactic, exploiting sympathy for Ukraine amid the war to boost engagement on posts promoting fringe websites. The tactic involves sharing an emotionally charged Facebook post about the war in Ukraine to drive up engagement, then editing the post to promote a clickbait article from a fringe website. The DFRLab identified thirty-one pages using this tactic, nineteen of which have links to Armenia. Some of the pages have hundreds of thousands of followers.

The DFRLab previously reported on a similar network that used the same tactic and was also administered from Armenia. The latest iteration identified by the DFRLab comprises two separate operations. The first involves ten Facebook pages, all of which have administrators located in Armenia. The second involves ten Facebook pages, nine of which have at least one administrator in Armenia, four of which have at least one administrator in Ukraine, and eight of which have an administrator with a hidden location. The fringe websites amplified by each operation are connected via advertising and tracking code: Google Analytics in the first case and MGID in the second. MGID code is a unique identifier associated with MGID, an advertising platform, and works much like a Google Analytics ID.

The two operations employed similar tactics to lure engagement and users but used different assets. The first network comprised nine fringe websites that shared a Google Analytics ID, ten Facebook pages, two groups, and at least four accounts. The second involved ten websites connected via MGID code, ten Facebook pages, five groups, and at least six accounts spreading content in the groups. The content promoted by the two networks mostly differed, but at times they reshared the same content or published similar bait-and-switch posts.

Sloppy fringe websites

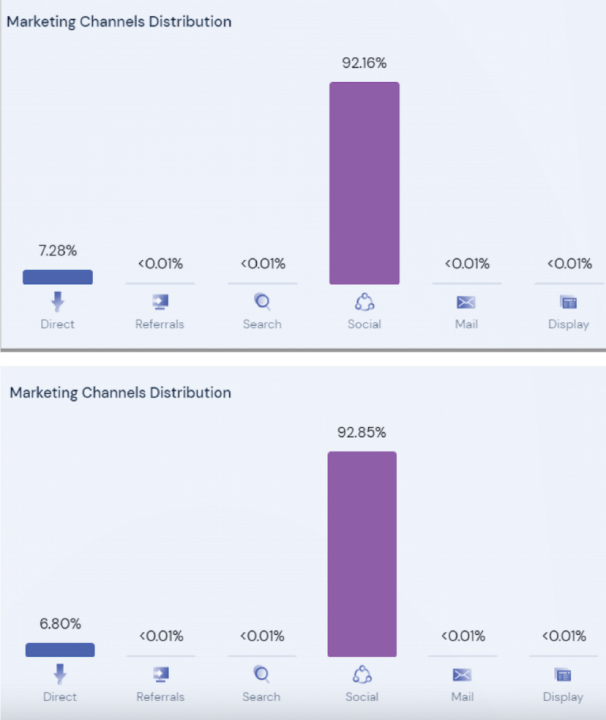

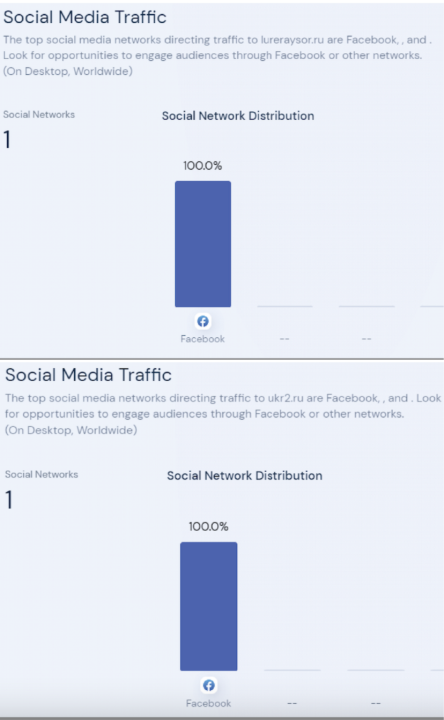

The first operation identified by the DFRLab used nine websites registered through different registrars but connected via a shared Google Analytics ID. Most of the websites were built on WordPress and contained careless mistakes. For example, websites that published Ukrainian-language content neither deleted placeholder text left over from the English template nor translated menu items from English. Facebook was the largest driver of traffic to the websites, according to the web analytics service SimilarWeb.
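The article does not describe how the shared ID was found. As a minimal sketch, assuming the HTML source of each site has already been saved locally, IDs in the Universal Analytics (`UA-12345678-1`) and GA4 (`G-` followed by letters and digits) formats can be extracted with a regular expression and grouped by site; any ID that appears on two or more sites links them. The function names, the regex, and the `.example` domains below are hypothetical, and the GA4 pattern in particular can produce false positives that need manual review.

```python
import re
from collections import defaultdict

# Hypothetical pattern covering Universal Analytics (UA-<account>-<property>)
# and GA4 (G-<id>) identifiers; loose enough to need manual review of hits.
GA_ID_PATTERN = re.compile(r"\b(UA-\d{4,10}-\d{1,4}|G-[A-Z0-9]{6,12})\b")


def extract_ga_ids(html: str) -> set[str]:
    """Return every Google Analytics-style ID that appears in a page's source."""
    return set(GA_ID_PATTERN.findall(html))


def cluster_by_shared_id(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each tracking ID to the sorted sites containing it, keeping only IDs found on 2+ sites."""
    sites_by_id: dict[str, set[str]] = defaultdict(set)
    for site, html in pages.items():
        for ga_id in extract_ga_ids(html):
            sites_by_id[ga_id].add(site)
    return {ga_id: sorted(sites) for ga_id, sites in sites_by_id.items() if len(sites) > 1}


# Hypothetical saved page sources.
pages = {
    "site-a.example": "<script>gtag('config', 'UA-11111111-1');</script>",
    "site-b.example": "<script>ga('create', 'UA-11111111-1', 'auto');</script>",
    "site-c.example": "<script>gtag('config', 'G-ABCDEF1234');</script>",
}
print(cluster_by_shared_id(pages))  # {'UA-11111111-1': ['site-a.example', 'site-b.example']}
```

Grouping by the full ID is the simplest choice; an analyst might also compare only the account portion of a `UA-` ID, since different properties under one account suggest a common owner.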

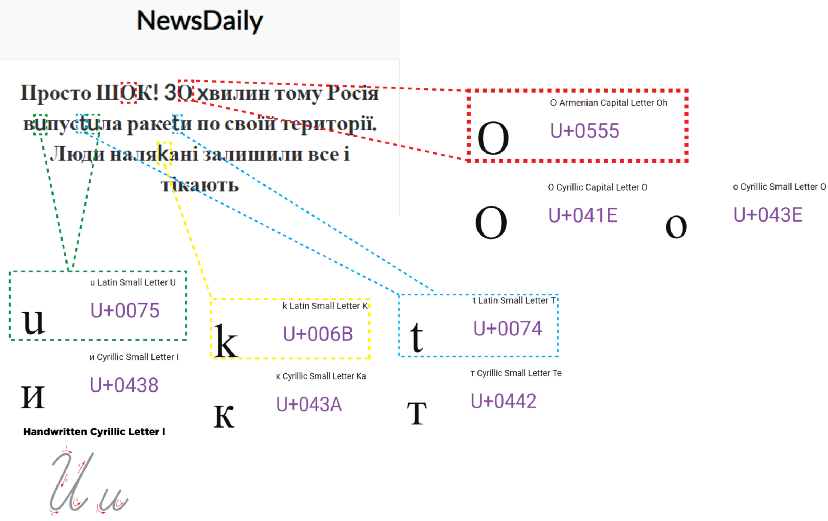

The websites exploit the war in Ukraine by publishing stories intended to elicit a strong emotional response. These articles focus on tragic, inspirational, or infuriating stories about the war. The websites also publish health and lifestyle articles. The content of the articles was usually taken from other sources without attribution and republished under a clickbait headline. For example, an article about a July explosion in Belgorod, Russia, was titled, “Just shock. 30 minutes ago, Russia launched missiles on its territory. People are afraid, left everything behind, and fleeing.” The text of the article is only forty-seven words long and simply restates the headline. Embedded in the story is a YouTube video from Radio Free Europe/Radio Liberty; however, the video was published in 2018. The DFRLab also reviewed the Unicode characters in the headline and confirmed that the Armenian letter ‘O’ is used instead of the Ukrainian Cyrillic ‘O.’ Latin characters are likewise substituted for other Cyrillic letters.
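The DFRLab does not describe its tooling for this check. As a minimal sketch using Python's standard `unicodedata` module, a script can flag words that mix letters from different writing systems, such as an Armenian ‘օ’ or a Latin ‘p’ inside an otherwise Cyrillic word. The function names and sample headline are hypothetical, and the script heuristic (the first word of each character's Unicode name) is a rough approximation, not a full implementation of the Unicode Script property.

```python
import unicodedata


def char_script(ch: str) -> str:
    """Approximate a character's script from the first word of its Unicode name."""
    # e.g. 'CYRILLIC SMALL LETTER O' -> 'CYRILLIC', 'ARMENIAN SMALL LETTER OH' -> 'ARMENIAN'
    name = unicodedata.name(ch, "")
    return name.split(" ", 1)[0] if name else "UNKNOWN"


def mixed_script_words(text: str) -> list[tuple[str, list[str]]]:
    """Return each whitespace-separated word whose letters span more than one script."""
    flagged = []
    for word in text.split():
        # Only letters are considered, so digits and punctuation never trigger a flag.
        scripts = {char_script(ch) for ch in word if ch.isalpha()}
        if len(scripts) > 1:
            flagged.append((word, sorted(scripts)))
    return flagged


# Hypothetical headline: Armenian U+0585 in place of Cyrillic 'о',
# and Latin 'p' (U+0070) in place of Cyrillic 'р'.
headline = "Просто ш\u0585к. \u0070акети"
for word, scripts in mixed_script_words(headline):
    print(word, scripts)
```

On screen, the sample headline looks like ordinary Cyrillic text, which is why a character-level check is needed to spot the substitutions.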

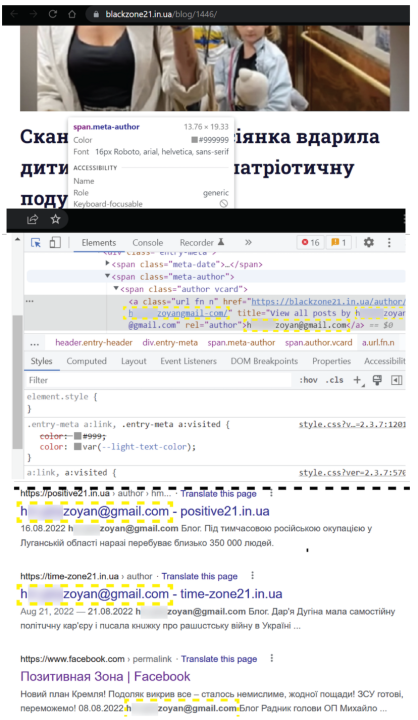

The second group of websites is connected via MGID code. These websites also run on WordPress and likewise substitute Latin characters for Cyrillic ones. The DFRLab found that at least two of the websites exposed the administrator’s email address in their source code, in the section designated for the article’s byline.
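The DFRLab does not detail how it located the addresses. As a minimal sketch, assuming each site's HTML source has been saved locally, a simple regular expression can list the email addresses that appear in the markup and show which sites share one. The function name, pattern, and sample markup are hypothetical; the pattern is deliberately loose and will both miss some valid addresses and match some strings that are not addresses.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately simple email pattern (not RFC 5322 complete).
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def sites_by_email(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each email address found in page source (lowercased) to the sites it appears on."""
    sites: dict[str, set[str]] = defaultdict(set)
    for site, html in pages.items():
        for email in EMAIL_PATTERN.findall(html):
            sites[email.lower()].add(site)
    return dict(sites)


# Hypothetical byline markup from two saved pages.
pages = {
    "site-a.example": '<span class="byline" data-author="admin@mail.example">',
    "site-b.example": '<a class="author" href="mailto:admin@mail.example">',
}
for email, found_on in sites_by_email(pages).items():
    print(email, sorted(found_on))
```

An address that appears on more than one site is a lead that the sites share an operator, not proof on its own.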

Audience building

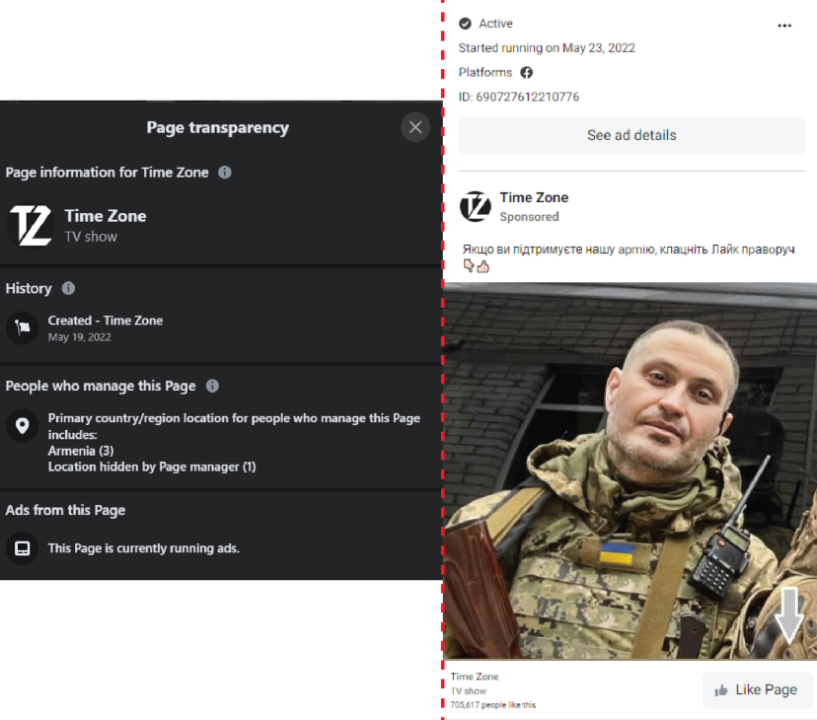

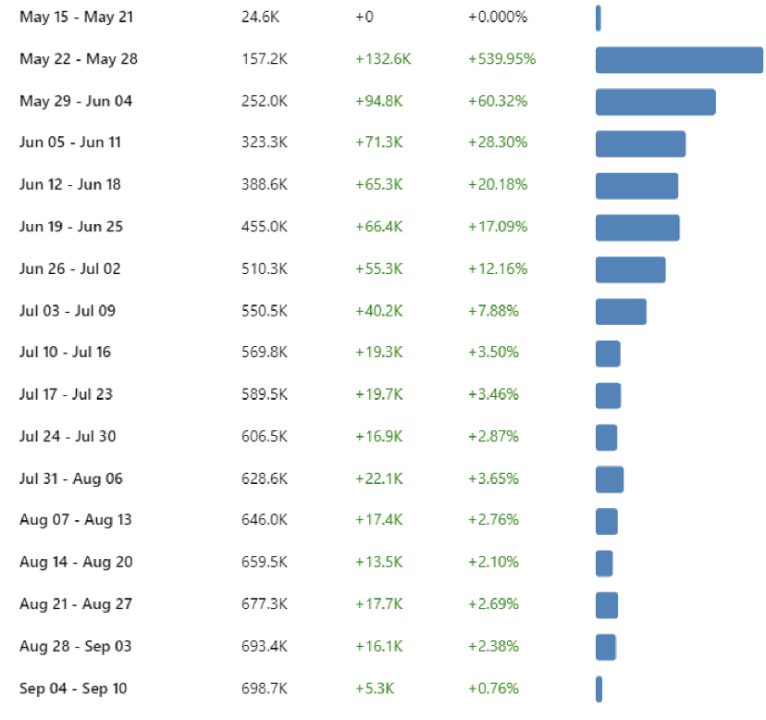

The fringe websites in the operations were promoted by at least twenty Facebook pages identified by the DFRLab. Specific Facebook pages appear to have been assigned specific websites to promote, as each page almost exclusively promoted two or three websites from the larger set. At times, though, several pages promoted the same website simultaneously. The Facebook pages promoting the domains in the network exploited the war in Ukraine to build their audience via advertisements. For example, the Facebook page Time Zone, created on May 19, 2022, ran an ad featuring a picture of Akhtem Seitablaev, a Ukrainian actor and director who joined the Ukrainian forces after the Russian invasion. The ad shows Seitablaev in uniform and reads, “If you support our army, click Like on the right.” The ad ran from May 23 to at least September 9. Three of the page’s administrators reside in Armenia, while the fourth administrator’s location is hidden. At the time of writing, the page had 705,617 likes. According to CrowdTangle, the page saw a dramatic rise in followers beginning the week of May 22. Because this growth coincides with the launch of the ad, the ad likely contributed to the page’s large audience despite its low-quality content.

Bait-and-switch

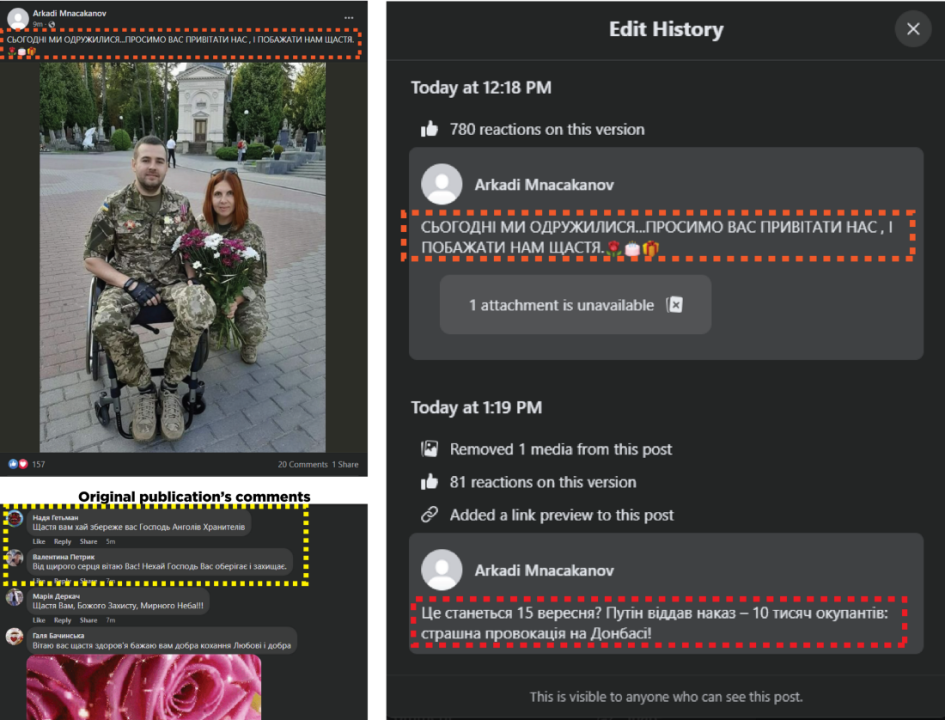

Both operations identified by the DFRLab sought to manipulate engagement on their posts through bait-and-switch tactics. An account creates an emotionally charged post in a Facebook group to solicit engagement and comments, and the post’s author then edits its content to link to a clickbait article. The high initial engagement helps boost the edited post. The post is then shared on Facebook pages, where users cannot see that it was edited without going directly to the group where it was originally made.

The first network identified by the DFRLab used the groups Жизненные цитаты (“Life’s Stories”) and Психология отношений (“Relationship psychology”) for their operation. The second network used two sets of groups with identical names; two groups shared the name Найди свой фильм (“Find your movie”), while two other groups were named Омар Хаям (“Omar Khayyam”). A fifth group was named Новые сериалы 2022 (“New series 2022”).

In one example of the bait-and-switch tactic, at least three accounts published posts in the Life’s Stories Facebook group about a Ukrainian soldier’s birthday, soliciting birthday wishes. In another example, a post made in the Omar Khayyam group described the wedding of two soldiers. The posts received several comments wishing the soldier a happy birthday or congratulating the couple on their marriage. About thirty to fifty minutes after the original post was made, the author edited it to replace the original content with a link to a clickbait article on a fringe website. The networks seek to exploit people’s sympathy for Ukraine and their respect for soldiers fighting against Russia in order to grow their audience.

While the DFRLab could not confirm that the two Armenia-linked operations are working in coordination, they use the same tactics and, at times, share the same captions and articles. The article that was switched into the marriage post in the Omar Khayyam Facebook group, which belongs to the second operation, was also shared in the Life’s Stories Facebook group, which belongs to the first. In another example, the Life’s Stories group replicated the marriage post and then edited it to point to a different article.

A composite image showing the edit history (top left) and the final article (bottom left) in the Omar Khayyam Facebook group; the edit history of a separate post in the Life’s Stories group (top right); and the same article reused in the Life’s Stories group (bottom right). The recycled caption is highlighted in red, and the recycled article is highlighted in yellow. (Source: Facebook)

The DFRLab cannot confirm the motivations behind the two operations, but they appear to be taking advantage of people’s emotional response to the war in Ukraine in order to manipulate engagement on posts and boost traffic to fringe websites.

Special thanks to Igor Rozkladaj for the initial tip on the number of pages disseminating similar stories.

Cite this case study:

Roman Osadchuk, “Facebook pages used bait-and-switch to exploit sympathies for Ukraine war,” Digital Forensic Research Lab (DFRLab), November 2, 2022, https://medium.com/dfrlab/facebook-pages-used-bait-and-switch-to-exploit-sympathies-for-ukraine-war-ce66ce1eaf80.