Georgian travel company behind inauthentic network promotes health-related clickbait content

Inauthentic network disseminated unreliable health-related content to promote travel company and increase sales

The DFRLab identified a cross-platform network targeting Georgian Facebook users with health-related disinformation and clickbait content. The network comprised seventy-one websites, twenty-nine Facebook pages, nineteen groups, and fifty-two accounts. The DFRLab linked the network to Avia.ge, a travel company that sells tours and flight tickets. The company attempted to attract audiences through clickbait articles spreading health disinformation, where readers were exposed to Avia.ge advertisements. The articles promoted health claims that lacked supporting evidence, including alternative treatment options, creating risks for anyone who followed such dubious advice.

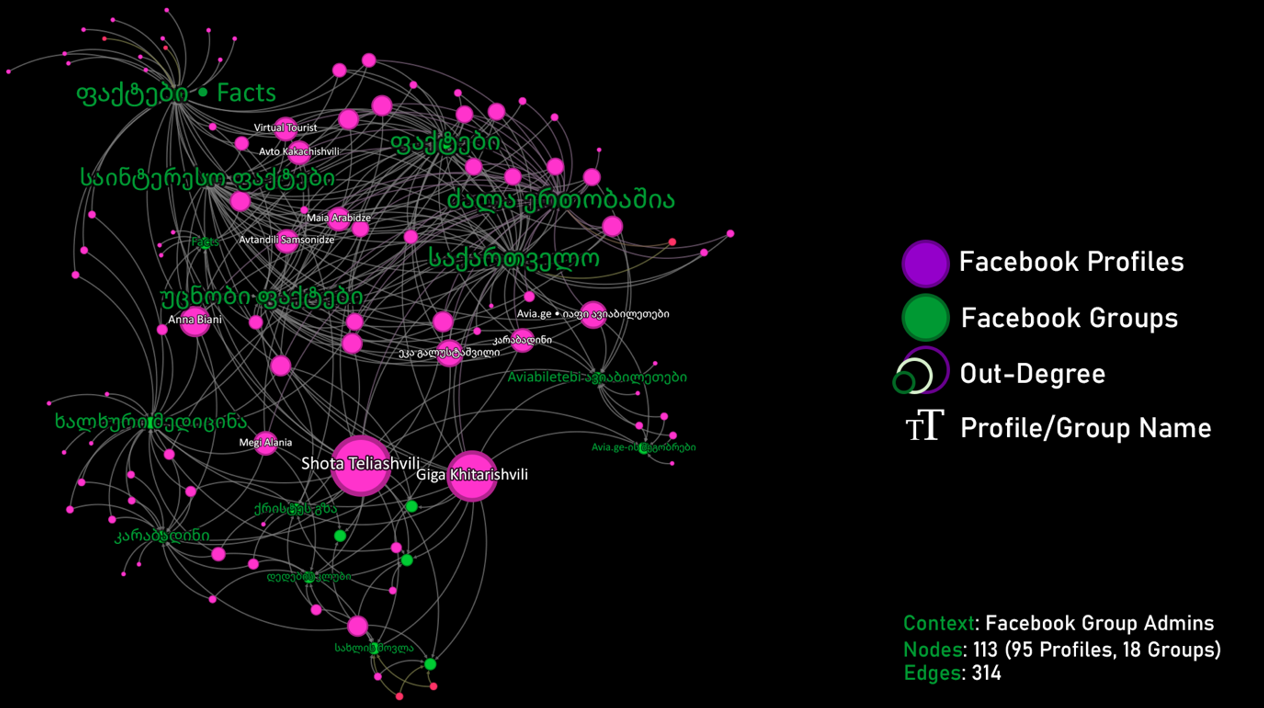

The travel company appears to be legitimate and has been operating in the Georgian market since 2018. According to an excerpt from the Georgian Public Registry, the company's director and sole shareholder is Shota Teliashvili, who is connected to the assets making up this network and to the associated websites.

Facebook pages

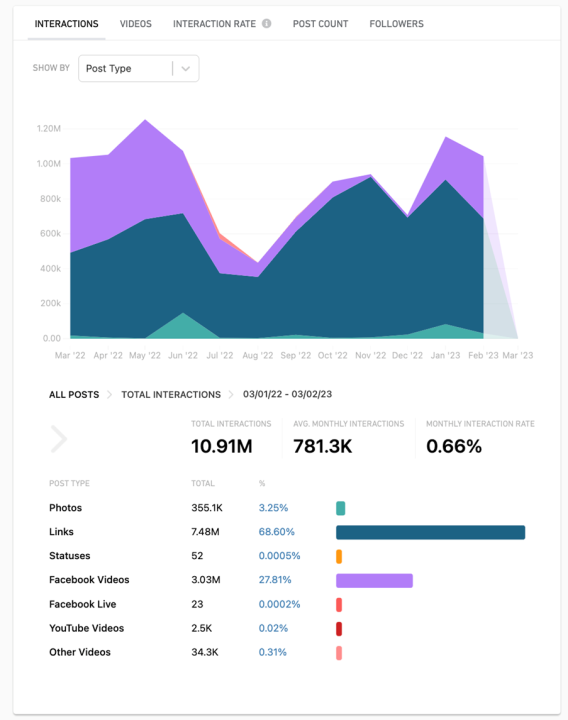

The DFRLab identified twenty-nine Facebook pages involved in this network. Most of the pages were created between 2016 and 2022, and together they had 2.1 million followers and 1.5 million likes. Between March 2022 and March 2023, the pages (excluding the official Avia.ge company pages) garnered more than 10 million interactions, an average of 780,000 per month. Using CrowdTangle, the Meta-owned monitoring platform, the DFRLab found that 68 percent of the posts were links to websites identified as part of the broader network, and 28 percent included videos clipped from entertainment TV programs and series and published as if they were original content. These videos were then cross-posted.

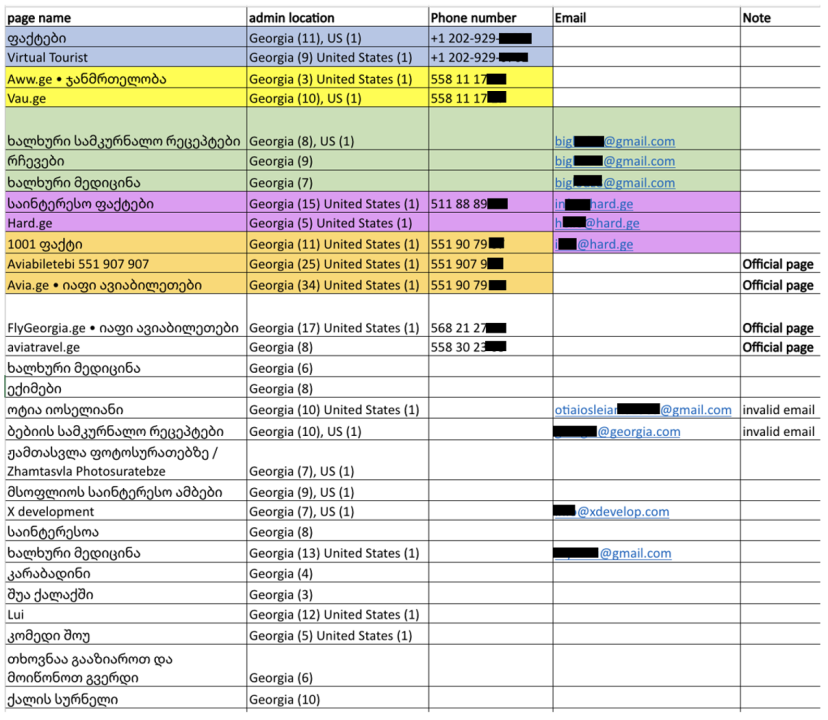

The pages were managed from Georgia and the United States. Nineteen pages from the network, including the official page of Avia.ge, had one administrator based in the United States. This pattern in the administrator information suggests coordination between these assets. Similarly, the phone number for Avia.ge was also listed as the contact number on one of the inauthentic pages, 1001 ფაქტი (“1001 Facts”). Three of the pages also listed the same contact email. The DFRLab conducted a reverse lookup of the email and found dozens of websites associated with it. The websites linked to this network are discussed below.

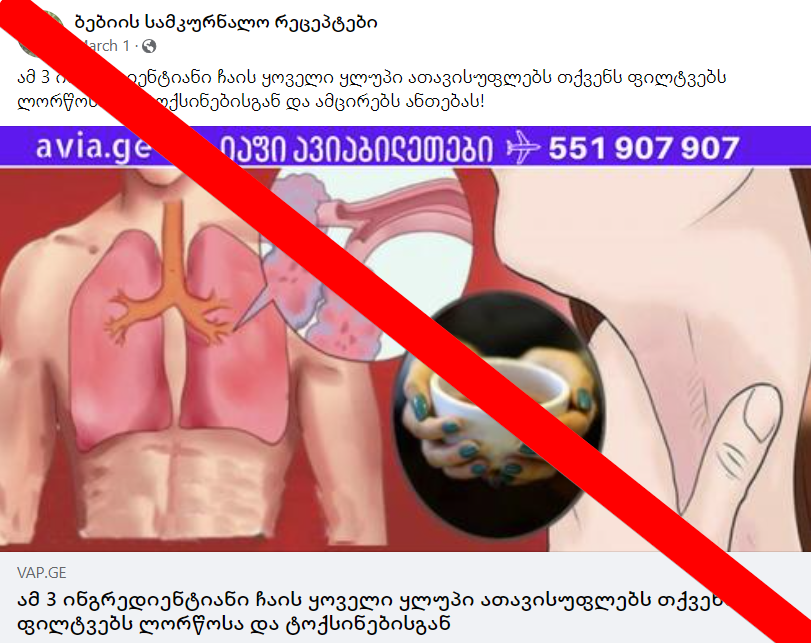

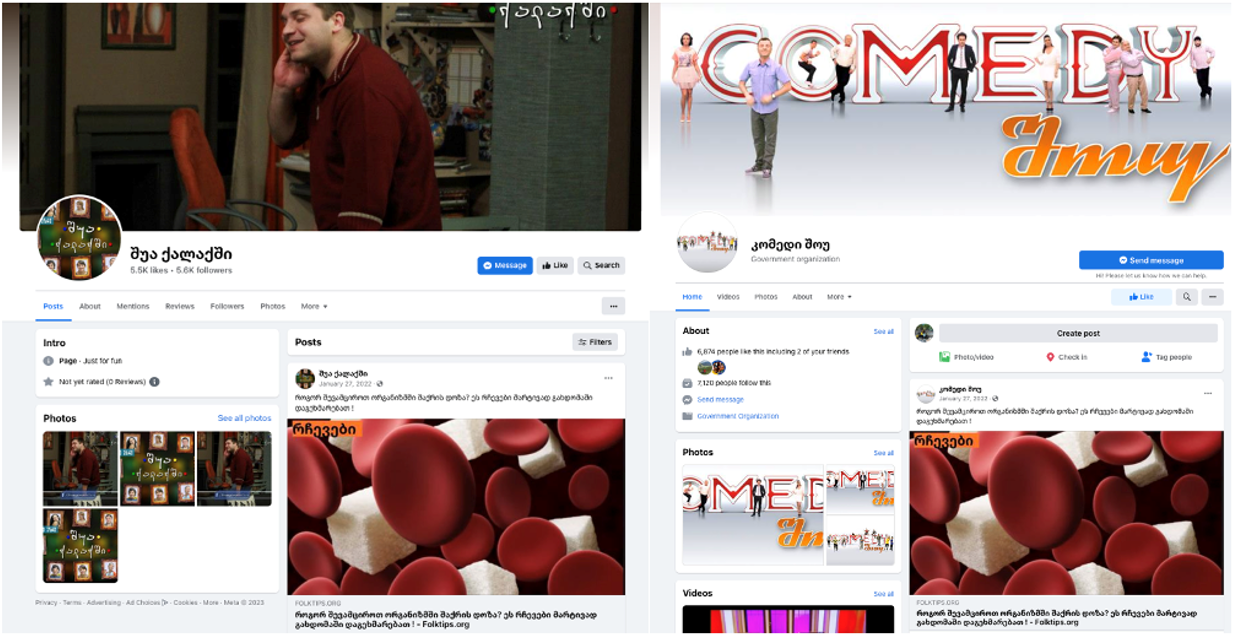

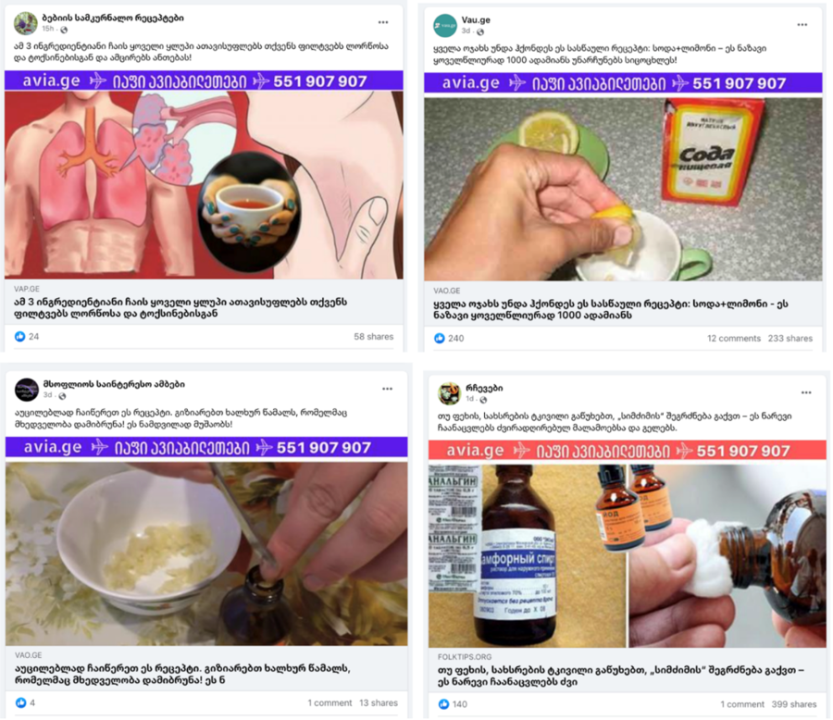

Four pages from this network used variations of “Avia” in their names, were dedicated solely to travel, and openly promoted Avia.ge. This list includes the official page of the company, Avia.ge • იაფი ავიაბილეთები (“Avia.ge • cheap flights”). Eight pages from the network presented themselves as focused on healing methods or health in general. These pages had names such as Folk medicine, Health, Doctors, and Grandma’s healing methods. Several pages posed as entertainment pages and promoted health-related disinformation together with Avia.ge advertisements, including two pages named after popular TV series. These pages also posted pictures of the shows' characters to attract and deceive audiences.

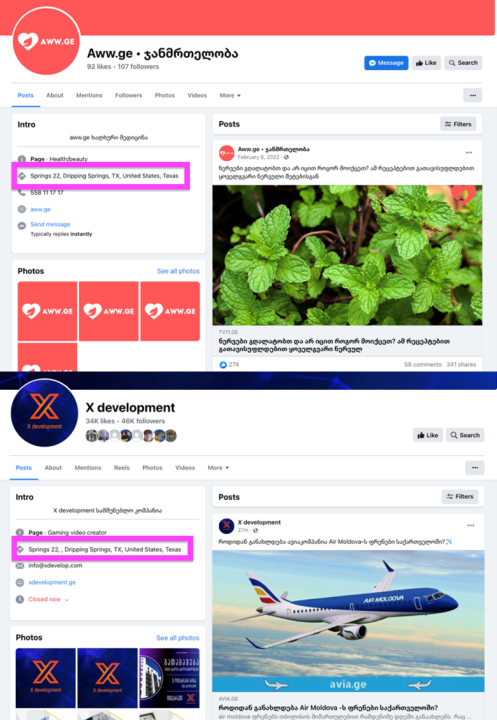

One page, Aww.ge • ჯანმრთელობა (“Aww.ge • Health”), mimicked the pharmacy company and medical center Aversi by appropriating its logo and branding colors. The page also included an address in the US state of Texas, which was also listed on another page in the network, X Development. The latter posed as a development company but predominantly promoted clickbait from the website Brandnews.ge, as well as entertainment content.

Eighteen pages out of twenty-nine predominantly disseminated health-related clickbait and unreliable information. As mentioned above, the posts with links largely directed users to external websites promoting health disinformation and unverified information, mostly focused on folk remedies and alternative medicine. Through the content on the external websites, Facebook users were exposed to Avia.ge advertisements.

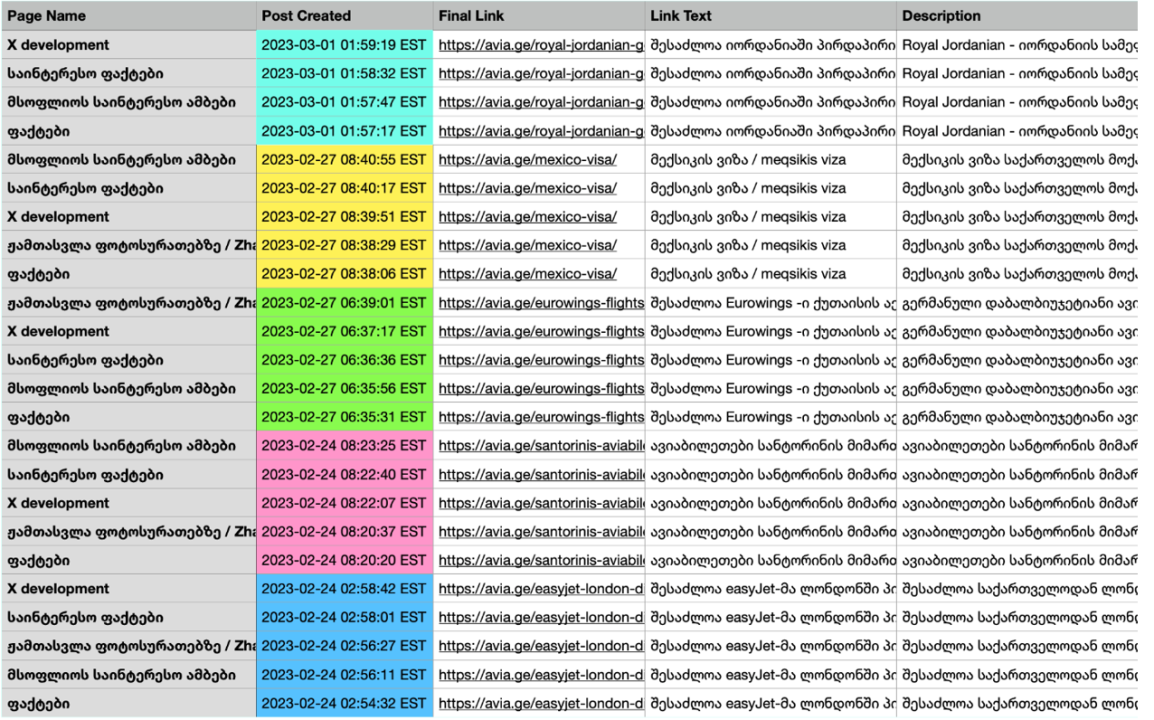

Pages in the network systematically posted links to the Avia.ge website within minutes of one another, indicating either a degree of technical coordination or that the pages were centrally managed and linked to Avia.ge.
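Timing overlaps of this kind can be surfaced with a simple sliding-window check. The sketch below is illustrative and is not the DFRLab's actual tooling: it assumes posts are available as hypothetical `(page_name, url, timestamp)` tuples (for example, rows exported from a monitoring tool), and the ten-minute `window` and three-page `min_pages` thresholds are arbitrary assumptions.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def find_coordinated_bursts(posts, window=timedelta(minutes=10), min_pages=3):
    """Flag URLs that at least `min_pages` distinct pages posted within `window` of each other.

    `posts` is an iterable of (page_name, url, timestamp) tuples (an assumed input format).
    Returns (url, sorted page names) for the first qualifying burst of each URL.
    """
    by_url = defaultdict(list)
    for page, url, ts in posts:
        by_url[url].append((ts, page))

    bursts = []
    for url, events in by_url.items():
        events.sort()  # chronological order
        start = 0
        for end in range(len(events)):
            # Shrink the window from the left until it spans at most `window`.
            while events[end][0] - events[start][0] > window:
                start += 1
            pages = {page for _, page in events[start:end + 1]}
            if len(pages) >= min_pages:
                bursts.append((url, sorted(pages)))
                break  # one burst is enough to flag this URL
    return bursts

# Hypothetical example data: three pages share the same link within seven minutes.
posts = [
    ("Page A", "https://avia.ge/example", datetime(2023, 1, 1, 12, 0)),
    ("Page B", "https://avia.ge/example", datetime(2023, 1, 1, 12, 3)),
    ("Page C", "https://avia.ge/example", datetime(2023, 1, 1, 12, 7)),
    ("Page D", "https://example.org/", datetime(2023, 1, 1, 12, 0)),
    ("Page E", "https://example.org/", datetime(2023, 1, 1, 15, 0)),
]
print(find_coordinated_bursts(posts))  # [('https://avia.ge/example', ['Page A', 'Page B', 'Page C'])]
```

A burst alone does not prove common control; it is one signal to weigh alongside shared administrators and contact details.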

Facebook groups

The DFRLab also identified nineteen Facebook groups that participated in this network by posting content linking to the network’s websites and related Facebook pages. Some of these groups were created as far back as April 2014, and some appear to have been created in batches.

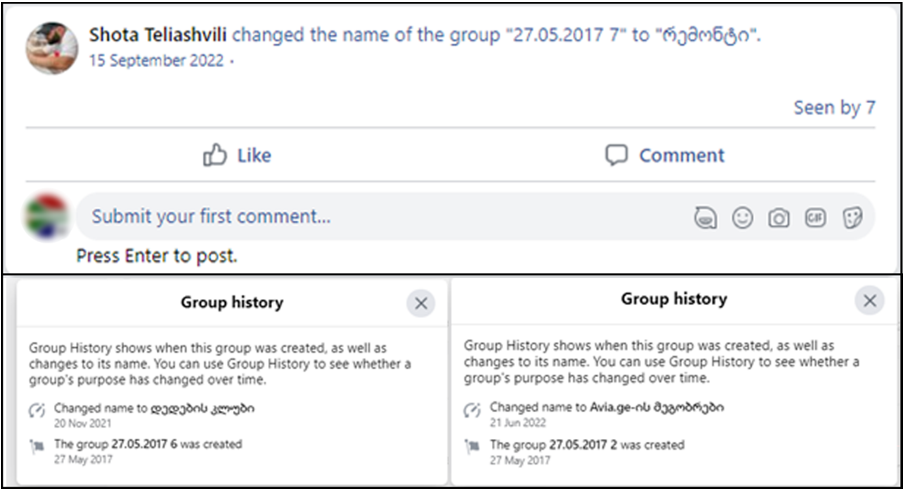

These batch-created groups would use a generic naming convention consisting of the date of creation and an enumerator and would later be renamed to something more topical.

For example, Teliashvili created the group “27.05.2017 7” on May 27, 2017. The same naming convention was used to create several other groups on the same day. On September 15, 2022, Teliashvili renamed the group to the more topical, albeit still generic, რემონტი (“Repair”).

This pattern holds for other groups created on the same day. The DFRLab identified six groups created on May 27, 2017, but the naming convention Teliashvili used suggests that the network includes other groups we have not been able to identify.
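The reasoning about unidentified groups can be made concrete: if the enumerator counts up within each creation date, gaps in the observed numbers point to groups not yet found. The following is a minimal sketch that assumes original (pre-rename) names such as “27.05.2017 7” are available and that numbering starts at 1 without skips; both are assumptions, and the helper names are hypothetical.

```python
import re
from collections import defaultdict
from datetime import date

# Matches batch-style names such as "27.05.2017 7" (DD.MM.YYYY followed by an enumerator).
BATCH_NAME = re.compile(r"^(\d{2})\.(\d{2})\.(\d{4}) (\d+)$")

def group_batches(names):
    """Group batch-style names by creation date, returning {date: sorted enumerators}."""
    batches = defaultdict(list)
    for name in names:
        m = BATCH_NAME.match(name.strip())
        if m:  # topical names (e.g. after a rename) are ignored
            day, month, year, idx = map(int, m.groups())
            batches[date(year, month, day)].append(idx)
    return {d: sorted(idxs) for d, idxs in batches.items()}

def missing_enumerators(indices):
    """Enumerators between 1 and the highest observed value that were never seen.

    Assumes numbering starts at 1 and increments by 1; this is an assumption, not a finding.
    """
    return sorted(set(range(1, max(indices) + 1)) - set(indices))
```

For instance, with hypothetical observed names “27.05.2017 2”, “27.05.2017 3”, and “27.05.2017 7”, `missing_enumerators([2, 3, 7])` returns `[1, 4, 5, 6]`, each a candidate group to look for.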

Another unifying feature of these Facebook groups was shared administrators. Of the eighteen groups that the DFRLab analyzed, seven had some combination of the same thirteen administrator accounts. Although these administrators were distributed differently among the groups, the recurrence of the same accounts across these groups was another indicator of coordinated behavior between the assets in this network.
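Overlap of this kind can be quantified by comparing the administrator lists of every pair of groups. The sketch below is a hypothetical illustration: it assumes administrator lists have already been collected per group, and the `min_shared` threshold is an arbitrary choice rather than the DFRLab's criterion.

```python
from collections import Counter
from itertools import combinations

def admin_frequency(groups):
    """Count how many groups each administrator account appears in."""
    return Counter(admin for admins in groups.values() for admin in set(admins))

def shared_admin_pairs(groups, min_shared=2):
    """List pairs of groups that share at least `min_shared` administrators."""
    pairs = []
    for (g1, admins1), (g2, admins2) in combinations(groups.items(), 2):
        shared = set(admins1) & set(admins2)
        if len(shared) >= min_shared:
            pairs.append((g1, g2, sorted(shared)))
    return pairs
```

With hypothetical data such as `{"G1": ["acc1", "acc2", "acc3"], "G2": ["acc2", "acc3"], "G3": ["acc4"]}`, only the pair `G1`/`G2` is returned, sharing `acc2` and `acc3`; admins that recur across many groups stand out in `admin_frequency`.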

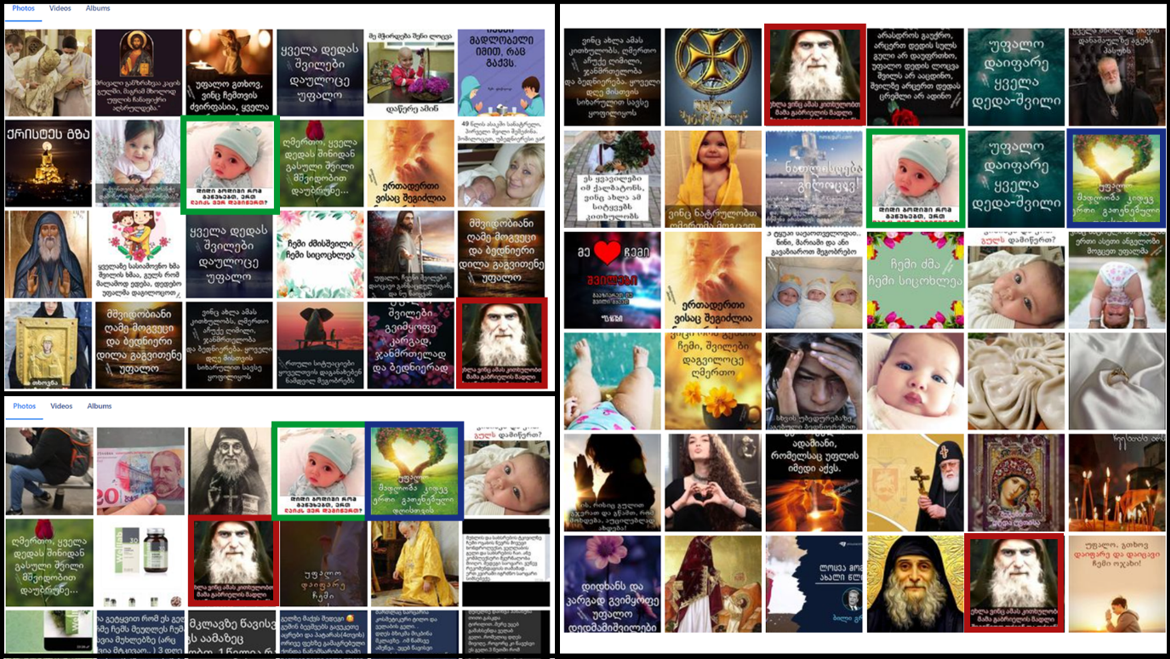

The same content was also posted across multiple groups; in many cases, these posts featured religious imagery. It is quite possible that these were early attempts to boost the groups' membership by posting positive content intended to lure religious Georgians into joining.

Facebook accounts

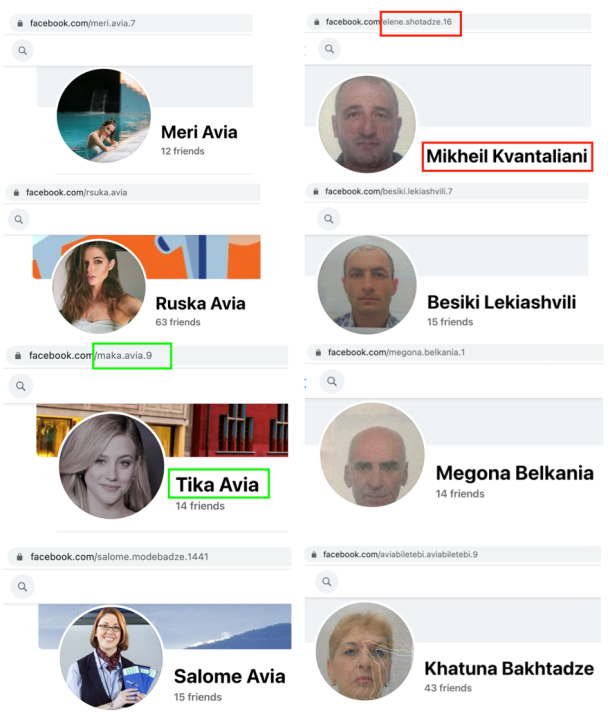

The network also included fifty-two personal accounts, of which forty demonstrated signs of inauthenticity. These accounts managed the groups analyzed above and promoted content from the websites and the pages in this network, as well as Avia.ge. At least thirteen accounts stole pictures of other users or from elsewhere on the internet, one indicator of inauthenticity.

Five accounts from the network demonstrated a similar pattern of using a passport/ID photo as a profile image. Similarly, the usernames of eleven accounts repeated the word “Avia.” The accounts from this list also demonstrated discrepancies between the URL and the profile name, another sign of inauthenticity.

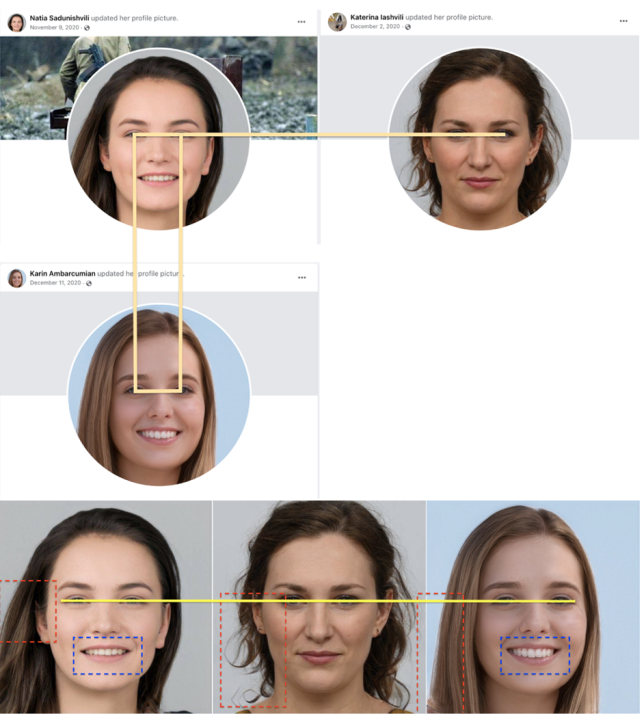

Two accounts also used profile pictures that appear to have been AI-generated. The DFRLab established this by identifying unnatural features in the images, such as irregular teeth, unusual hair, and odd skin texture, as well as the consistent position of the eyes, a common trait of faces produced by generative adversarial networks (GANs).

Websites

In addition to the assets found on Facebook, the network also made use of several off-platform websites that used medical clickbait to generate web traffic to sites hosting Avia.ge advertisements. These websites featured spurious and untested cures under sensational headlines and seemed to be designed to draw in users curious about folklore-inspired cures.

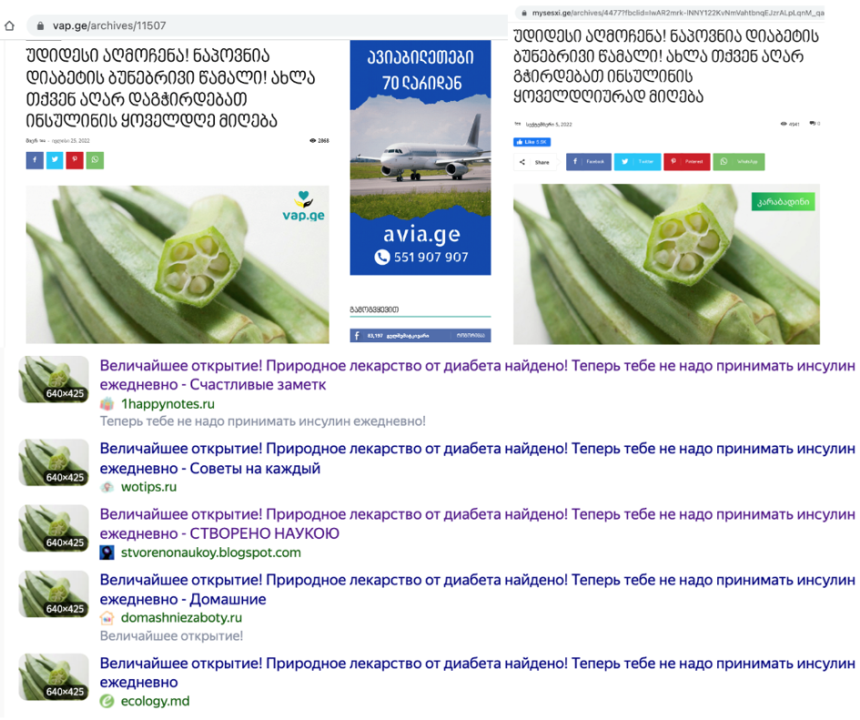

For example, three websites in this network – Folktips.org, Mysesxi.ge, and Vap.ge – promoted content with the identical Georgian headline, “A great discovery! A natural cure for diabetes has been found! Now you no longer need to take insulin every day.”

The articles on the websites are most likely machine-translated, as they contain significant errors incompatible with Georgian grammar and syntax. By reverse image searching the article’s main image, the DFRLab found similar articles originally published in Russian. Plugging the Russian-language version of the article into Google Translate produced a translation with identical wording to that of the Georgian-language version published on the websites in the network.
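The comparison between the published Georgian text and the Google Translate output can be scored rather than eyeballed. The following is a minimal sketch using Python's standard `difflib`; the normalization step (Unicode NFC, lowercasing, dropping punctuation, collapsing whitespace) is our assumption about what should count as "identical wording," not the DFRLab's documented procedure.

```python
import difflib
import re
import unicodedata

def normalize(text):
    """Normalize Unicode, lowercase, strip punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFC", text).lower()
    text = re.sub(r"[^\w\s]", "", text)  # \w is Unicode-aware, so Georgian letters are kept
    return " ".join(text.split())

def translation_similarity(published, machine_output):
    """Return a 0.0 to 1.0 similarity ratio between two normalized texts."""
    return difflib.SequenceMatcher(None, normalize(published), normalize(machine_output)).ratio()
```

A ratio at or near 1.0 across whole articles would support the conclusion that the published text is unedited machine output; a lower score would not rule it out, since translation engines change over time.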

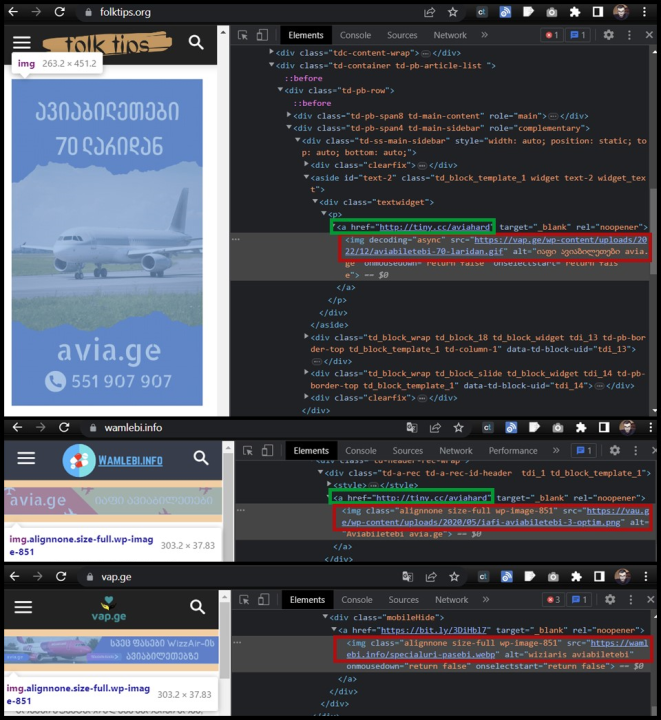

These three websites were part of a network of similar sites that appear to have been created solely to funnel traffic toward Avia.ge’s websites. Besides sharing identical content, at least three of the websites displayed prominent banner advertisements promoting Avia.ge whose image files were hosted on other websites in the network. For example, the Avia.ge banner displayed on folktips.org was actually hosted on vap.ge, and the banner image shown on Wamlebi.info was hosted on Vau.ge.
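Cross-hosted banners can be found by parsing a page's `<img>` tags and comparing each image's host with the page's own host. The following is a minimal sketch built on Python's standard `html.parser`; it assumes the page HTML has already been downloaded, and the sample markup and file paths are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

class ImgCollector(HTMLParser):
    """Collect the src attribute of every <img> tag."""
    def __init__(self):
        super().__init__()
        self.srcs = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.srcs.append(src)

def _bare_host(url):
    """Lowercased hostname without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def external_image_hosts(page_url, html):
    """Return the sorted hosts serving images on `page_url` other than the page's own host."""
    parser = ImgCollector()
    parser.feed(html)
    page_host = _bare_host(page_url)
    hosts = {_bare_host(urljoin(page_url, src)) for src in parser.srcs}
    return sorted(h for h in hosts if h and h != page_host)
```

Run over each site in a suspected network, the results form a graph of which sites serve assets to which; in the case above, an edge from folktips.org to vap.ge would appear.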

Another indicator of coordination and centralized management was that several of the banner images linked to the same custom shortened URL: tiny.cc/aviahard.

In addition, no fewer than seventy-one associated websites used the same Google Analytics code, pointing yet again to possible coordination and management between the sites. In this case, the Google Analytics code (UA-62572792) was used on all seventy-one websites, including folktips.org, mysesxi.ge, vap.ge, and avia.ge itself. The list of sites also featured Adsocial.ge, a social media marketing company for which Teliashvili is listed as the “Digital Manager.”

However, it should be noted that not all of these websites using the same analytics code are necessarily associated with the Avia.ge network, as some might be bona fide clients of Teliashvili’s digital marketing company.
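Shared analytics IDs are typically found by searching each site's HTML for the tracking code. Universal Analytics IDs take the form `UA-<account>-<property>`, and the account number (such as 62572792 above) is the part shared across sites. The sketch below assumes the HTML has already been collected per domain; the property suffixes in any sample data are hypothetical. As noted above, a shared account shows common analytics management, not necessarily common ownership.

```python
import re
from collections import defaultdict

# Universal Analytics IDs: UA-<account>-<property>; group sites by the account number.
UA_ID = re.compile(r"\bUA-(\d{4,10})-\d{1,4}\b")

def group_by_ga_account(pages):
    """Map each UA account number to the sorted domains whose HTML contains it.

    `pages` is a dict of {domain: html_source} (an assumed input format).
    """
    accounts = defaultdict(set)
    for domain, html in pages.items():
        for account in UA_ID.findall(html):
            accounts[account].add(domain)
    return {acct: sorted(domains) for acct, domains in accounts.items()}
```

Any account number mapped to many domains marks a cluster worth checking against other signals, such as shared hosting or WordPress users.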

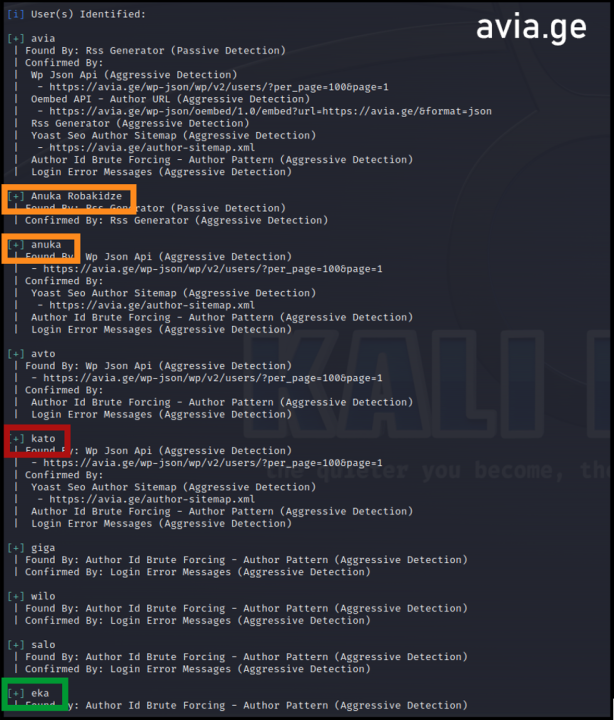

A final association between these sites lies in the user accounts used to access and update the information contained on these websites. Since the sites use WordPress, a content management platform for websites and blogs, tools such as WPScan can identify the user accounts used to log into a site and upload content. A WPScan analysis of a selection of the sites in the network using WordPress showed multiple overlapping users registered across the sites. These users included names and surnames of individuals who could be traced back to Teliashvili’s personal and business connections, including a person who appears to be his girlfriend.
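WPScan combines several user-enumeration techniques. One of them, WordPress's public REST API users endpoint (`/wp-json/wp/v2/users`), is easy to reproduce. The simplified stand-in below is not WPScan itself: many sites restrict this endpoint, and only the first page of up to 100 users is requested. The `overlapping_users` helper then finds accounts registered on more than one site.

```python
import json
from urllib.request import Request, urlopen

def fetch_wp_users(site, timeout=10):
    """Return {slug: display name} from a site's public WordPress REST users endpoint, if exposed."""
    req = Request(
        f"https://{site}/wp-json/wp/v2/users?per_page=100",
        headers={"User-Agent": "Mozilla/5.0"},
    )
    with urlopen(req, timeout=timeout) as resp:
        return {user["slug"]: user.get("name", "") for user in json.load(resp)}

def overlapping_users(users_by_site):
    """Map each user slug to the sorted sites it appears on, keeping slugs seen on 2+ sites."""
    seen = {}
    for site, users in users_by_site.items():
        for slug in users:
            seen.setdefault(slug, set()).add(site)
    return {slug: sorted(sites) for slug, sites in seen.items() if len(sites) > 1}
```

In practice, `fetch_wp_users` would be called for each candidate domain (with errors handled for sites that block the endpoint), and the results passed to `overlapping_users`. Matching slugs are a lead to verify, not proof that the same person runs both sites.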

The network also included the website Wamlebi.info (“Medications Info”). Its about section proclaims it to be the “largest database in Georgia about pills and medications” and claims to sell gels and dietary supplements that the DFRLab identified as being manufactured by the Russian herbal company Handel’s Garden. None of the products listed on the Wamlebi.info website is approved by reputable health authorities or sold in pharmacies or stores. The website requests the customer’s name and phone number and says a representative will contact the customer with detailed information. Each product has a customer review section. The DFRLab found that the comments are inauthentic, as the customers’ photos appear to have been taken from elsewhere on the internet. The comments also appear to be machine-translated, as their language does not follow Georgian grammar.

Overall, this network exhibited several indicators of coordination and inauthenticity among websites, user accounts, pages, and groups. The network used tactics previously documented by the DFRLab, including deploying networks of inauthentic assets and machine-translating content. The network resorted to spreading harmful health content for financial gain and used disinformation as a marketing strategy to boost company sales. The assets targeted the Georgian audience with potentially harmful, evidence-free health advice, including false treatments that, if followed, could put individuals at risk.

Cite this case study:

Sopo Gelava and Jean Le Roux, “Georgian travel company behind inauthentic network promotes health-related clickbait content,” Digital Forensic Research Lab (DFRLab), October 3, 2023, https://dfrlab.org/2023/10/02/georgian-travel-company-behind-inauthentic-network-promotes-health-related-clickbait-content.