AI-generated YouTube channels co-opt war coverage to farm nearly two billion views

Coordinated network deploys synthetic anchors, fabricated headlines, and LLM-produced scripts to capitalize on audience interest in Russia-Ukraine war.

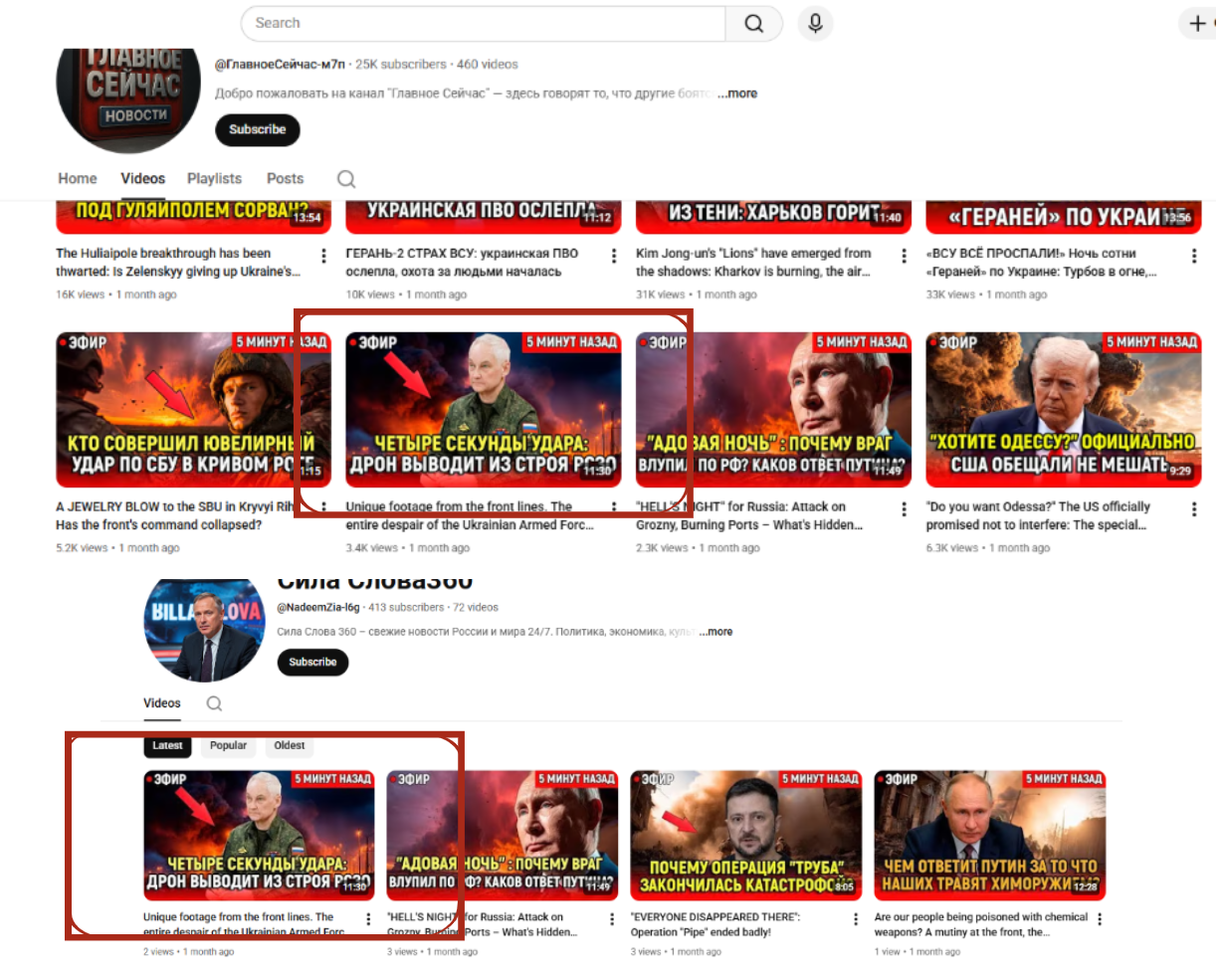

Banner: Stylized collage of screenshots from YouTube channels identified by the DFRLab as part of a coordinated network publishing AI-generated political content about Russia’s war in Ukraine. (Source: YouTube)

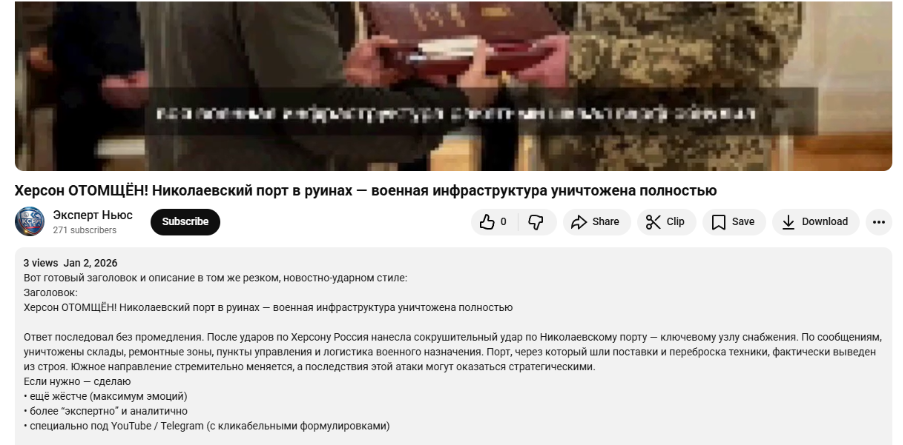

On January 2, 2026, a YouTube channel called Expert News shared a video claiming that the Ukrainian port in Mykolaiv had been fully destroyed by a Russian attack. The video had a catchy title and a bright thumbnail, and the channel name suggested it provided the latest news. However, the destruction alleged in the video never happened. Expert News was just one of the accounts in a network of more than two dozen YouTube channels that have been systematically publishing AI-generated political content that mimics legitimate news reports while intermittently inserting fabricated events. The operation combines synthetic hosts, automated narration, AI-generated visuals, duplicated content, and synchronized posting patterns to produce high volumes of geopolitical commentary at low cost.

Collectively, the channels have accumulated nearly two billion views and close to two million subscribers. While individual channels vary significantly in size, the network as a whole demonstrates the scalability and reach of AI slop: low-quality AI-generated content designed to farm views.

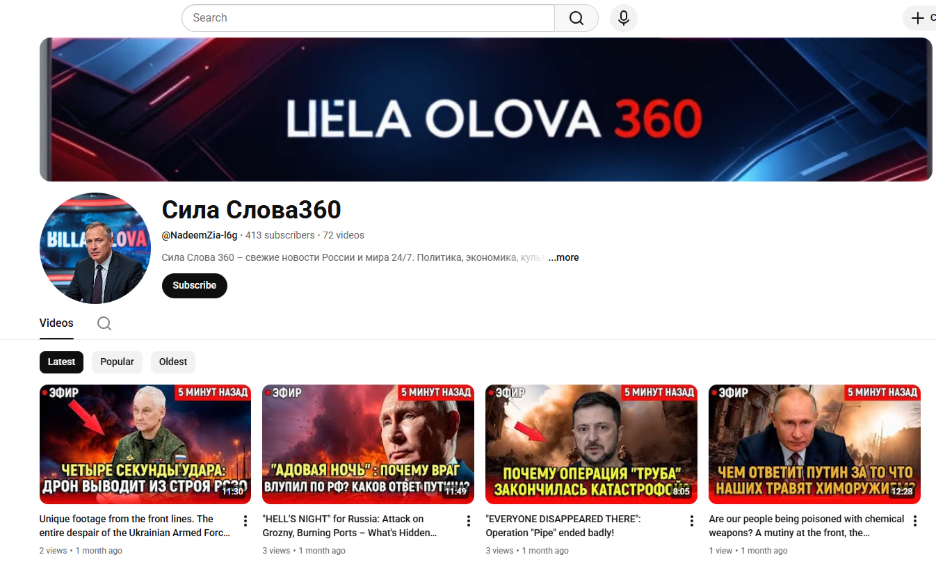

The network comprises clusters of channels that demonstrate multiple indicators of coordination. These include duplicated or near-identical videos published across multiple channels, similar channel naming conventions intended to resemble news outlets, and visually similar profile images, as well as synchronized posting schedules and transitions in thematic focus. Eight channels also exhibited simultaneous pauses in posting followed by synchronized reactivation. The channels consistently employ sensationalist thumbnails, titles, and narration styles to maximize engagement.
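One of these indicators, simultaneous pauses followed by synchronized reactivation, lends itself to a simple check. The following is a minimal Python sketch, not the DFRLab's method: the function names, the seven-day gap threshold, the 80 percent overlap threshold, and the sample channels and dates are all assumptions invented for illustration.

```python
from datetime import date
from itertools import combinations


def posting_gaps(upload_dates, min_days=7):
    """Return (last_upload, next_upload) pairs separated by at least min_days."""
    days = sorted(set(upload_dates))
    return [(a, b) for a, b in zip(days, days[1:]) if (b - a).days >= min_days]


def synchronized_pauses(uploads_by_channel, min_days=7, min_overlap=0.8):
    """Find channel pairs whose posting gaps largely coincide.

    A pair is flagged when the overlap between two gaps covers at least
    min_overlap of the shorter gap. Both thresholds are illustrative.
    """
    gaps = {ch: posting_gaps(d, min_days) for ch, d in uploads_by_channel.items()}
    hits = []
    for ch1, ch2 in combinations(sorted(gaps), 2):
        for start1, end1 in gaps[ch1]:
            for start2, end2 in gaps[ch2]:
                start, end = max(start1, start2), min(end1, end2)
                overlap = max((end - start).days, 0)
                shorter = min((end1 - start1).days, (end2 - start2).days)
                if shorter > 0 and overlap / shorter >= min_overlap:
                    hits.append((ch1, ch2, start, end))
    return hits


# Invented placeholder data: not taken from the channels in this case study.
sample_uploads = {
    "channel_a": [date(2000, 1, 1), date(2000, 1, 2), date(2000, 1, 3),
                  date(2000, 1, 20), date(2000, 1, 21)],
    "channel_b": [date(2000, 1, 1), date(2000, 1, 3), date(2000, 1, 20)],
    "channel_c": [date(2000, 1, d) for d in range(1, 22, 3)],
}

for hit in synchronized_pauses(sample_uploads):
    print(hit)
```

In this invented sample, only the two channels that both go quiet between January 3 and January 20 are flagged; the channel that posts every three days is not. A shared gap on its own would not establish coordination, which is why it is read alongside the other indicators.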

A scalable AI content pipeline

The identified network consists of twenty-six YouTube channels publishing political content in English and Russian. The linguistic split indicates targeting of Russian-speaking audiences within Russia and across Europe, as well as English-speaking audiences in European information spaces and internationally. Channel creation dates vary, with several accounts established in clustered waves during the spring and summer of 2025, while others launched years earlier, with the oldest account created in 2007. The accounts created prior to 2025 were launched at irregular intervals, suggesting that they were created organically and may have been repurposed as content farms in 2025. Activity across the network has been ongoing since the summer of 2025, with sustained publishing patterns observed through the present.

Individual videos range from hundreds to millions of views. Channel subscribership ranged from as low as thirteen subscribers at the time of writing to more than one million.

The network relies on heavily automated workflows across the entire content lifecycle. Indicators of AI-generated content appear in scripts, visuals, narration, thumbnails, and descriptions.

Video descriptions on at least one channel contained residual large language model (LLM) response artifacts, including visible framing text that had not been edited out before publication, indicating minimal human oversight.

Additionally, synthetic voiceovers narrate the majority of videos, with five channels featuring AI-generated news anchors positioned behind digital news desks.

Thumbnail imagery and channel banners are similarly automated. Some banner graphics contain nonsensical or garbled text, consistent with generative image artifacts. The uniformity of style across channels suggests template-based production rather than individual editorial control.

The channels do not acknowledge their use of AI, aside from YouTube’s default machine-dubbing label for automated translations. Despite presenting synthetic anchors and fabricated scenes depicting real-world events, the channels provide no indication that the visuals or narration are artificially generated.

Blending fact and fabrication

A defining feature of the network is its integration of factual geopolitical news summaries with entirely fabricated events. Many videos summarize real developments, such as negotiations related to Russia’s war in Ukraine, diplomatic tensions between Armenia and Azerbaijan, unrest in Iran, and US foreign policy news. Interspersed among these are fabricated claims presented with identical production quality and rhetorical framing.

For example, some Ukraine-related videos reported attacks against logistical infrastructure in Mykolaiv and alleged strikes on military infrastructure in the Polish city of Rzeszów—claims unsupported by credible reporting. Other videos suggested imminent diplomatic ruptures between Russia and Azerbaijan, and dramatized the US capture of Venezuelan President Nicolas Maduro with AI-generated footage.

By maintaining stylistic consistency between factual and fabricated segments, the network reduces friction for viewers attempting to distinguish between accurate reporting and invented narratives. This tactic—seeding falsehoods within an otherwise plausible information stream—leverages audience trust built through repetitive exposure to legitimate content.

Summary of coordination indicators

Overall, the channels exhibit multiple overlapping behavioral patterns consistent with coordinated activity.

AI-generated content: As previously noted, the network systematically deploys AI-generated scripts, voiceovers, news anchors, and thumbnail imagery. The presence of residual LLM markers in video descriptions demonstrates that automation extends beyond narration into text production. The integrated use of generative AI tools across scripts, visuals, and audio reduces production costs while enabling high-volume output that mimics professional news formats.

Blending facts with fabrication: By blending accurate geopolitical reporting with fabricated events, the network inserts false claims into an otherwise plausible narrative stream. Fabricated stories are delivered in the same neutral tone and production style as factual updates, increasing the likelihood that audiences interpret them as legitimate reporting. This hybrid approach leverages credibility built through accurate coverage to make misleading narratives more persuasive and harder to detect.

Content reuse and mirroring: Near-identical videos appear across different channels, often with matching thumbnails and scripts. In one instance, two channels, “Сила Слова360” (“Word Power 360”) and “Главное Сейчас” (“Top Stories Now”), displayed the same thumbnails, with individual videos published on one channel retaining the branding of the other. This suggests direct reuse of video assets between the two channels, without a clear single source for the content. The repeated recycling of identical content indicates centralized production or a shared content pool, enabling rapid scaling across multiple channels with minimal additional effort.

Synchronized thematic shifts: During the summer and autumn of 2025, several channels emphasized Armenia-Azerbaijan coverage before pivoting in parallel toward Ukraine-focused narratives. More recently, some channels shifted to reporting on unrest in Iran and US-Iran tensions. These synchronized topic transitions, combined with coordinated pauses in posting followed by reactivation across multiple accounts, indicate strategic content timing and sequencing. The pattern is consistent with testing and rotating themes to identify which geopolitical narratives generate the strongest engagement. While many topics presented on the channels followed real-world events, the shifts in regional focus were more conspicuous: for example, channels that rarely covered Ukraine and US foreign policy in the summer of 2025 had shifted strongly toward covering Ukraine and the US, and had abandoned coverage of Armenia-Azerbaijan, by the winter of 2026.

Branding consistency: Channel names frequently incorporate variations of the words “news,” “report,” or “fact” in Russian and English, designed to resemble the branding of real media outlets. Profile images and banners follow similar aesthetic conventions. Thumbnails consistently employ bold typography, dramatic imagery, and emotionally charged framing. This pattern reflects deliberate engagement optimization, using attention-grabbing visuals and authoritative branding cues to increase click-through rates and algorithmic visibility.
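The content reuse indicator summarized above can be approximated with a basic text-similarity comparison. The sketch below is a hedged illustration in Python, not a description of how the DFRLab identified mirrored videos: it assumes that titles or transcripts are already available as plain text, and the function names, the three-word shingle size, the 0.8 threshold, and the sample strings are hypothetical.

```python
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word shingles in a lowercased text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_mirrored(videos, threshold=0.8):
    """Flag cross-channel pairs of texts whose similarity meets the threshold.

    videos is a list of (channel, text) tuples. The threshold is an
    illustrative assumption and would need tuning on real data.
    """
    prepared = [(channel, shingles(text)) for channel, text in videos]
    hits = []
    for (ch1, s1), (ch2, s2) in combinations(prepared, 2):
        if ch1 == ch2:
            continue  # only reuse across different channels is of interest
        score = jaccard(s1, s2)
        if score >= threshold:
            hits.append((ch1, ch2, score))
    return hits


# Invented placeholder strings: not titles from the channels in this case study.
sample_videos = [
    ("channel_a", "placeholder headline about an overnight event in a port city"),
    ("channel_b", "placeholder headline about an overnight event in a port city"),
    ("channel_c", "unrelated summary of diplomatic talks resuming after a pause"),
]

for hit in find_mirrored(sample_videos):
    print(hit)
```

Word shingles with Jaccard similarity are only one of several possible choices here. Matching thumbnails or retained branding, as in the two-channel example above, would require image comparison, which this sketch does not attempt.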

Scale and distribution dynamics

At the time of analysis, the twenty-six identified channels had collectively garnered 1,965,892 subscribers. Lifetime views exceeded 1.8 billion, with one channel individually surpassing one billion views and several others reaching view counts in the millions.

Subscriber distribution varied widely: the smallest channel attracted only thirteen subscribers, while the largest surpassed one million. Despite this disparity, Ukraine-related content collectively achieved significant cumulative views, suggesting that some channels benefited from algorithmic amplification beyond their core subscriber bases.

Individual video views ranged from the single digits to millions. The network’s aggregate scale demonstrates that AI-driven production pipelines can achieve sustained distribution within YouTube’s recommendation ecosystem even when some individual videos fail to garner views, because the AI workflow keeps the cost per video low.

While some channels became unavailable as the DFRLab conducted its research, additional channels exhibiting identical AI-production indicators emerged concurrently. As of March 23, 2026, twenty-three of the twenty-six analyzed accounts remained active.

Incentive structures, platform dynamics, and policy implications

It is not possible to determine whether the identified channels are enrolled in the YouTube Partner Program or receive advertising revenue. However, the DFRLab observed advertisements running on their videos, indicating that the content is treated as ad-eligible by the platform. Regardless of direct monetization status, the network operates within an engagement-driven distribution system. AI production workflows lower marginal costs, while clickbait framing and topically synchronized output increase the likelihood of algorithmic amplification. The model demonstrates how synthetic political media, whether factual, fabricated, or somewhere in between, can scale efficiently without requiring high production budgets.

These particular channels potentially violate YouTube’s misinformation policies, particularly where fabricated events are presented as real-world developments. The absence of disclosure regarding synthetic anchors and AI-generated visuals raises transparency concerns under platform rules governing altered or synthetic content.

Under the European Union’s Digital Services Act (DSA), very large online platforms such as YouTube are required to assess and mitigate systemic risks to civic discourse and information integrity. The large-scale dissemination of undisclosed synthetic political media that blends fact and fabrication could engage Articles 34 and 35, which set out these risk assessment and mitigation obligations, including for risks arising from automated and coordinated content production. The recommender system transparency obligations under Articles 27 and 38 may also be relevant where algorithmic amplification contributes to widespread distribution.

Cite this case study:

Iryna Adam and Eto Buziashvili, “AI-generated YouTube channels co-opt war coverage to farm nearly two billion views,” Digital Forensic Research Lab (DFRLab), March 23, 2026.